How To Choose An Algorithm For Predictive Analytics

We are living in a highly advanced technological period. Internet connections are faster than ever, and memory storage and computing power have moved to the cloud. Every day, things that were previously only possible in the realm of “sci-fi” become part of our daily lives. Included in this category is a set of techniques and tools called “predictive algorithms”. Predictive algorithms have changed the way we use data to anticipate the future, and they demonstrate the big strides computing technology has made.

In this blog, we’ll discuss criteria used to choose the right predictive model algorithm.

But first, let’s break down the process of predictive analytics into its essential components. For the most part, it can be dissected into 4 areas:

- Descriptive analysis

- Data treatment (Missing value and outlier treatment)

- Data Modelling

- Estimation of model performance

- Descriptive Analysis: In the beginning, we primarily built models based on regression and decision trees. These algorithms focus mostly on the variable of interest and on finding the relationships between the variables or attributes.

The introduction of advanced machine learning tools has made this process easier and quicker, even for very complex computations.

- Data Treatment: This is the most important step in generating appropriate model input, so we should have smart ways to make sure it’s done correctly. Here are two simple tricks which you can implement:

- Create dummy flags for missing value(s): In general, the fact that a value is missing in a variable can itself carry a good amount of information. So, we can create a dummy flag attribute that marks the missing entries and use it in the model.

- Impute missing values with the mean or another simple value: In basic scenarios, imputing the ‘mean’ or the ‘median’ works fine for a first iteration. In other cases, where the data is complex, with trend, seasonality and lows/highs, you probably need a more intelligent method to fill in the missing values.
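To make the two tricks concrete, here is a minimal sketch in plain Python. The `income` column and the helper names `add_missing_flag` and `impute` are made up for illustration, and missing values are assumed to be stored as `None`; in practice a library such as pandas or scikit-learn would usually do this work.

```python
from statistics import mean, median


def add_missing_flag(values):
    """Return a 0/1 flag per entry: 1 where the value is missing (None)."""
    return [1 if v is None else 0 for v in values]


def impute(values, strategy="mean"):
    """Replace None with the mean or median of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    fill = mean(observed) if strategy == "mean" else median(observed)
    return [fill if v is None else v for v in values]


# Hypothetical 'income' column with two missing entries.
income = [42.0, None, 38.0, 55.0, None, 45.0]

income_missing = add_missing_flag(income)  # extra dummy attribute for the model
income_filled = impute(income, "median")   # simple first-iteration imputation

print(income_missing)
print(income_filled)
```

Creating the flag before imputing matters: once the gaps are filled, the information about which rows were originally missing is gone.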

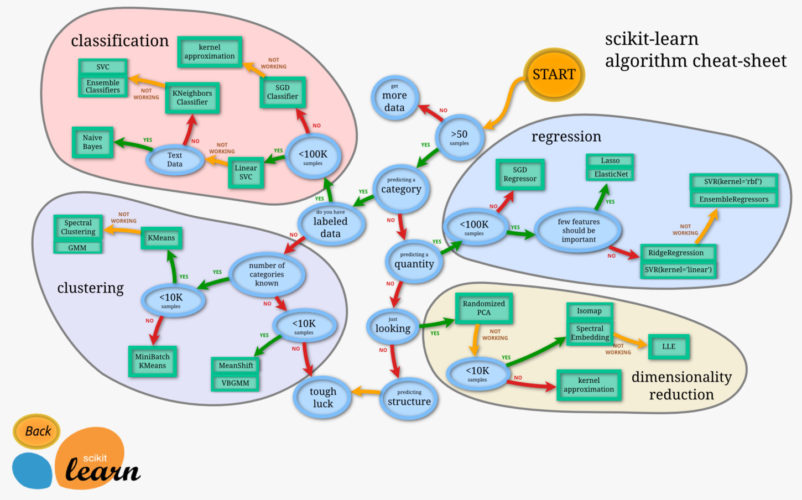

- Data Modelling: Generalized Boosted Models (GBM) can be extremely effective for datasets of around 100,000 observations. In cases of larger data, you can consider running a Random Forest. The cheat-sheet below will help you decide which method to use and when.

Please click here or click the picture to get the source of the below cheat-sheet.

- Estimation of Performance of the Model: The goal of predictive modeling is to create models that are good at making predictions on new, unseen data.

Therefore, it is critically important to use robust techniques to train and evaluate your models on your available training data. The more reliable your performance estimate, the more confidently you can judge how the model will behave on new data.

There are many model evaluation techniques that you can try in R or Python. Below are some of them:

- Training Dataset: Prepare your model on the entire training dataset, then evaluate the model on the same dataset. This is generally problematic because an algorithm could game this evaluation technique by simply memorizing (storing) all training patterns and achieving a perfect score, which would be misleading.

- Supplied Test Set: Split your dataset manually using another program. Prepare your model on the entire training dataset and use the separate test set to evaluate the performance of the model. This is a good approach if you have a large dataset (many tens of thousands of instances).

- Percentage Split: Randomly split your dataset into training and testing partitions each time you evaluate a model, usually in a 70-30% ratio. This can give you a more meaningful estimate of performance and, like using a supplied test set, is preferable only when you have a large dataset.

- Cross-Validation: Split the dataset into k partitions, or folds. Train a model on all of the folds except one, which is held out as the test set, then repeat this process to create k different models, giving each fold a chance of being held out as the test set. Then calculate the average performance of all k models.

This is one of the traditional, standard methods for evaluating model performance, but it is somewhat time-consuming because k models have to be built to obtain the estimate.
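The difference between these techniques is easy to see in a small experiment. The sketch below uses only the Python standard library and a deliberately "memorizing" model (1-nearest-neighbour) on synthetic data with noisy labels. The data generator, the function names and the 20% label-noise rate are all assumptions chosen for illustration, not part of any particular toolkit.

```python
import random


def make_data(n, seed=0):
    """Synthetic 1-D data: label is 1 when x > 0.5, with about 20% of labels flipped."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.random()
        y = int(x > 0.5)
        if rng.random() < 0.2:  # label noise
            y = 1 - y
        data.append((x, y))
    return data


def predict_1nn(train, x):
    """1-nearest-neighbour: return the label of the closest training point."""
    return min(train, key=lambda row: abs(row[0] - x))[1]


def accuracy(train, test):
    """Fraction of test rows whose label the 1-NN model predicts correctly."""
    hits = sum(predict_1nn(train, x) == y for x, y in test)
    return hits / len(test)


def percentage_split(data, train_frac=0.7, seed=0):
    """Shuffle a copy of the data and cut it into train/test partitions."""
    rows = data[:]
    random.Random(seed).shuffle(rows)
    cut = round(len(rows) * train_frac)
    return rows[:cut], rows[cut:]


def cross_validate(data, k=5, seed=0):
    """Average accuracy over k folds, each held out once as the test set."""
    rows = data[:]
    random.Random(seed).shuffle(rows)
    folds = [rows[i::k] for i in range(k)]
    scores = []
    for i in range(k):
        test = folds[i]
        train = [row for j, fold in enumerate(folds) if j != i for row in fold]
        scores.append(accuracy(train, test))
    return sum(scores) / k


data = make_data(200)

train_acc = accuracy(data, data)        # evaluate on the training data itself
train, test = percentage_split(data)    # 70-30 percentage split
split_acc = accuracy(train, test)
cv_acc = cross_validate(data, k=5)      # 5-fold cross-validation

print(f"training set: {train_acc:.2f}")
print(f"70-30 split:  {split_acc:.2f}")
print(f"5-fold CV:    {cv_acc:.2f}")
```

Because 1-nearest-neighbour stores every training point, its score on the training set is perfect even though a fifth of the labels are noise. The held-out estimates are lower and much closer to what the model would achieve on new data. In scikit-learn, `train_test_split` and `cross_val_score` provide the same two procedures.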

Conclusion:

Ultimately, the work that goes into selecting algorithms to help to predict future trends and events is worthwhile. It can result in better customer service, improved sales, and better business practices. Each of these things can, of course, result in increased profits or lowered expenses. Both are desirable outcomes. The information above should act as a bit of a primer on the subject for those new to using analytics.

What challenges do you or your company have in choosing the right predictive modeling algorithm or in model performance estimation? Share your story in the comments below!

We hope you like the blog and share it with your network. Please reach out to sales@bistasolutions.com for any query pertaining to Predictive Analytics solutions.