How Hadoop Can Overcome the Challenges with Big Data Analytics

There is no doubt that the new wave of big data is creating new opportunities, but it is also creating new challenges for businesses across all industries. Data integration is one of the most important challenges that IT engineers currently face. The major problem is incorporating data from social media and other unstructured sources into a traditional BI environment.

Here we present a robust solution to these data-related challenges.

We are talking about “Hadoop”, a cost-effective and scalable platform for big data analysis. Using Hadoop instead of traditional ETL (extraction, transformation, and loading) processes can give you better results in less time. Running a Hadoop cluster efficiently means selecting an optimal combination of servers, storage systems, networking devices, and software.

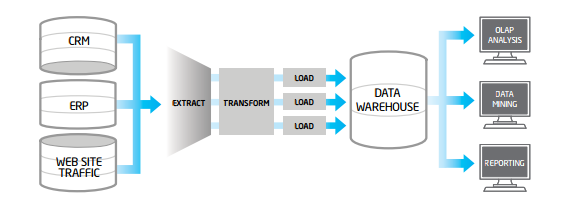

Generally, a typical ETL process extracts data from multiple sources, then cleanses, formats, and loads it into a data warehouse for analysis. When the source data sets are large, fast-growing, and unstructured, traditional ETL can become the bottleneck because it is complex, expensive, and time-consuming to develop, operate, and execute.

Fig #1: Depicts the Traditional ETL Process
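The traditional ETL flow described above can be sketched in a few lines of Python. This is only a minimal illustration, not a production pipeline: the two CSV sources, the column names, and the in-memory list standing in for the data warehouse are all assumptions made for the example.

```python
import csv
import io

# Hypothetical raw sources: two CSV exports with inconsistent formatting.
SOURCE_A = "id,name,amount\n1, Alice ,10.50\n2,Bob,\n"
SOURCE_B = "id,name,amount\n3,carol,7\n"


def extract(raw_sources):
    """Extract: read every row from every source."""
    for raw in raw_sources:
        yield from csv.DictReader(io.StringIO(raw))


def cleanse(rows):
    """Cleanse: drop rows that have no amount."""
    return (row for row in rows if row["amount"].strip())


def format_row(row):
    """Format: normalize types, whitespace, and capitalization."""
    return {
        "id": int(row["id"]),
        "name": row["name"].strip().title(),
        "amount": float(row["amount"]),
    }


def load(rows, warehouse):
    """Load: append the formatted rows to the target table."""
    warehouse.extend(rows)
    return warehouse


def run_etl(raw_sources):
    warehouse = []  # stand-in for a data warehouse table (assumption)
    return load(map(format_row, cleanse(extract(raw_sources))), warehouse)


warehouse = run_etl([SOURCE_A, SOURCE_B])
print(warehouse)
```

Every step here runs sequentially in a single process, which is why this style of pipeline tends to become the bottleneck as the sources grow.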

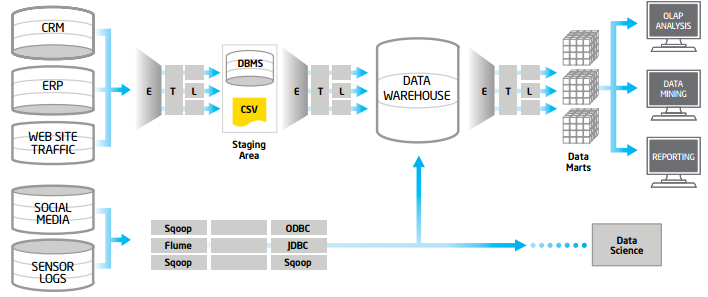

Fig #2: Depicts ETL offload to Hadoop.

Apache Hadoop for Big Data

Hadoop is an open-source, Java-based framework that supports the storage and processing of large data sets in a distributed computing environment. It runs on a cluster of commodity machines. Hadoop lets you store petabytes of data reliably across a large number of servers and scale performance cost-effectively by simply adding inexpensive nodes to the cluster. Much of Hadoop's scalability comes from its distributed processing framework, known as “MapReduce”.

MapReduce is a method for processing large amounts of data in parallel, in which the developer only has to write two pieces of code: a “Mapper” and a “Reducer”. In the map phase, MapReduce takes the input data and feeds each data element to a mapper. In the reduce phase, the reducer combines the partial, intermediate outputs from all the mappers and produces a final result. MapReduce is an important programming model because it lets engineers use parallel programming constructs without having to handle the complex details of intra-cluster communication, task monitoring, and failure handling.
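To make the two roles concrete, here is a minimal word-count sketch in plain Python. It does not use the Hadoop API; the `mapper`, `reducer`, and `run_word_count` names are hypothetical, and `run_word_count` is only a single-process stand-in for the work the framework would do across a cluster.

```python
from collections import defaultdict


def mapper(line):
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def reducer(word, counts):
    """Combine all partial counts for one word into a final total."""
    return word, sum(counts)


def run_word_count(lines):
    # Stand-in for the framework: group mapper output by key, then reduce.
    grouped = defaultdict(list)
    for line in lines:
        for word, count in mapper(line):
            grouped[word].append(count)
    return dict(reducer(word, counts) for word, counts in grouped.items())


result = run_word_count(["big data big cluster", "data node"])
print(result)
```

The developer-written logic is limited to `mapper` and `reducer`; everything in `run_word_count` is what the framework would normally take care of.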

The system breaks the input data set into multiple chunks, and each chunk is assigned to a map task; the map tasks process the data in parallel. The map function reads its input as (key, value) pairs and produces a transformed set of (key, value) pairs as output. The outputs of the map tasks are then shuffled and sorted, and the intermediate (key, value) pairs are sent to the reduce tasks, which group them into the final results. In classic (Hadoop 1.x) MapReduce, the JobTracker and TaskTracker mechanisms schedule and monitor the tasks and restart any that fail.
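The split, map, shuffle-and-sort, and reduce stages described above can be simulated on one machine. The following is a rough sketch under stated assumptions: the sales records, the chunk size, and all function names are invented for illustration, a thread pool stands in for parallel map tasks, and scheduling, monitoring, and restarting failed tasks are not modeled at all.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import groupby
from operator import itemgetter


def split_input(records, chunk_size):
    """Break the input data set into fixed-size chunks."""
    return [records[i:i + chunk_size] for i in range(0, len(records), chunk_size)]


def map_task(chunk):
    """Read (record_id, "region,amount") pairs and emit (region, amount) pairs."""
    output = []
    for _record_id, value in chunk:
        region, amount = value.split(",")
        output.append((region, int(amount)))
    return output


def shuffle_and_sort(map_outputs):
    """Merge all map outputs, sort by key, and group the values per key."""
    merged = sorted(
        (pair for output in map_outputs for pair in output),
        key=itemgetter(0),
    )
    return [
        (key, [value for _, value in group])
        for key, group in groupby(merged, key=itemgetter(0))
    ]


def reduce_task(key, values):
    """Combine all intermediate values for one key into a final result."""
    return key, sum(values)


def run_job(records, chunk_size=2):
    chunks = split_input(records, chunk_size)
    with ThreadPoolExecutor() as pool:  # one map task per chunk
        map_outputs = list(pool.map(map_task, chunks))
    return [reduce_task(key, values) for key, values in shuffle_and_sort(map_outputs)]


records = [(0, "east,10"), (1, "west,5"), (2, "east,7"), (3, "north,1"), (4, "west,3")]
totals = run_job(records)
print(totals)
```

Because the intermediate pairs are sorted by key before reduction, each reduce call sees every value for its key in one place, regardless of which chunk produced it.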

The Hadoop framework includes the Hadoop Distributed File System (HDFS), a file system designed specifically for streaming access patterns and fault tolerance. HDFS stores large amounts of data by dividing it into blocks (usually 64 or 128 MB) and replicating those blocks across the machines in the cluster. By default, three replicas of each block are maintained. Capacity and performance can be increased by adding DataNodes, while a single NameNode manages the file system metadata.
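The arithmetic behind blocks and replicas can be sketched as follows. This is an illustrative calculation only: the function names and DataNode names are hypothetical, and the round-robin placement is a deliberate simplification, since real HDFS placement is rack-aware.

```python
import math

MB = 1024 * 1024


def plan_blocks(file_size_bytes, block_size_bytes=128 * MB, replication=3):
    """Estimate the block count and raw storage needed for one file."""
    num_blocks = math.ceil(file_size_bytes / block_size_bytes)
    return {
        "blocks": num_blocks,
        "block_replicas": num_blocks * replication,
        # The last block is not padded, so raw usage is size times replication.
        "raw_bytes": file_size_bytes * replication,
    }


def place_replicas(num_blocks, data_nodes, replication=3):
    """Naive round-robin placement (assumption: not the real HDFS policy)."""
    if replication > len(data_nodes):
        raise ValueError("need at least as many DataNodes as replicas")
    return {
        block: [data_nodes[(block + r) % len(data_nodes)] for r in range(replication)]
        for block in range(num_blocks)
    }


plan = plan_blocks(1000 * MB)
placement = place_replicas(plan["blocks"], ["dn1", "dn2", "dn3", "dn4"])
print(plan)
print(placement[0])
```

With a 128 MB block size, a 1000 MB file needs 8 blocks, and three-way replication turns that into 24 block replicas spread over the DataNodes.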

For more information or Implementation services, you can contact our expert at sales@bistasolutions.com or call on USA: +1 (858) 401 2332